We build enterprise-grade tools that help you control costs and ship faster.

Self-hosted infrastructure built for teams that take ownership of their AI seriously.

Our products are designed to make your organization faster, smarter, and more resilient.

Stop AI overspend before it happens. Pre-call enforcement, intelligent model routing, and verifiable compliance proofs. The only platform that prevents overspend instead of reporting it after the invoice.

Know which AI regulations apply to your systems before they take effect. Continuous monitoring of 25+ global regulatory sources. Cryptographically signed compliance attestations. Audit-ready out of the box.

Shred PRDs into Jira stories in seconds, and get your team building. Real-time code quality checks, collision detection, and design-to-implementation drift detection. Built for engineering teams where AI now writes most of the code.

Every other AI cost tool is a rearview mirror. Centinel is a brake pedal.

Your prompts, your usage patterns, your compliance evidence, all stay on your infrastructure. The only outbound traffic is license validation. Air-gap deployments are a first-class feature, not a contractual concession.

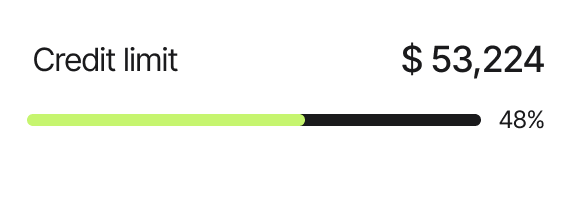

No per-token charges. No per-seat surprises. Just flat license fees. Your governance tools should not become their own cost problem as you scale.

Designed to integrate where it matters. Centinel and Guardian share signal as a unified governance layer. HyperPace works alongside as a separate operational tool for engineering teams. We don't force integration where it doesn't serve you — but where it does, it's deep and intentional.

Dire Wulf was founded by Nicholas Babb, who spent two decades designing enterprise experiences before watching firsthand what happens when AI gets deployed without the right operational scaffolding. After evaluating the available tooling and finding it expensive, fragmented, and customer-hostile, he started building what should have existed.

Spinning up Centinel is just the tip of the iceberg. We have created a deep knowledge base your team can use to get off the ground in minutes.

Every product ships with a free tier. No credit card. No sales call required.